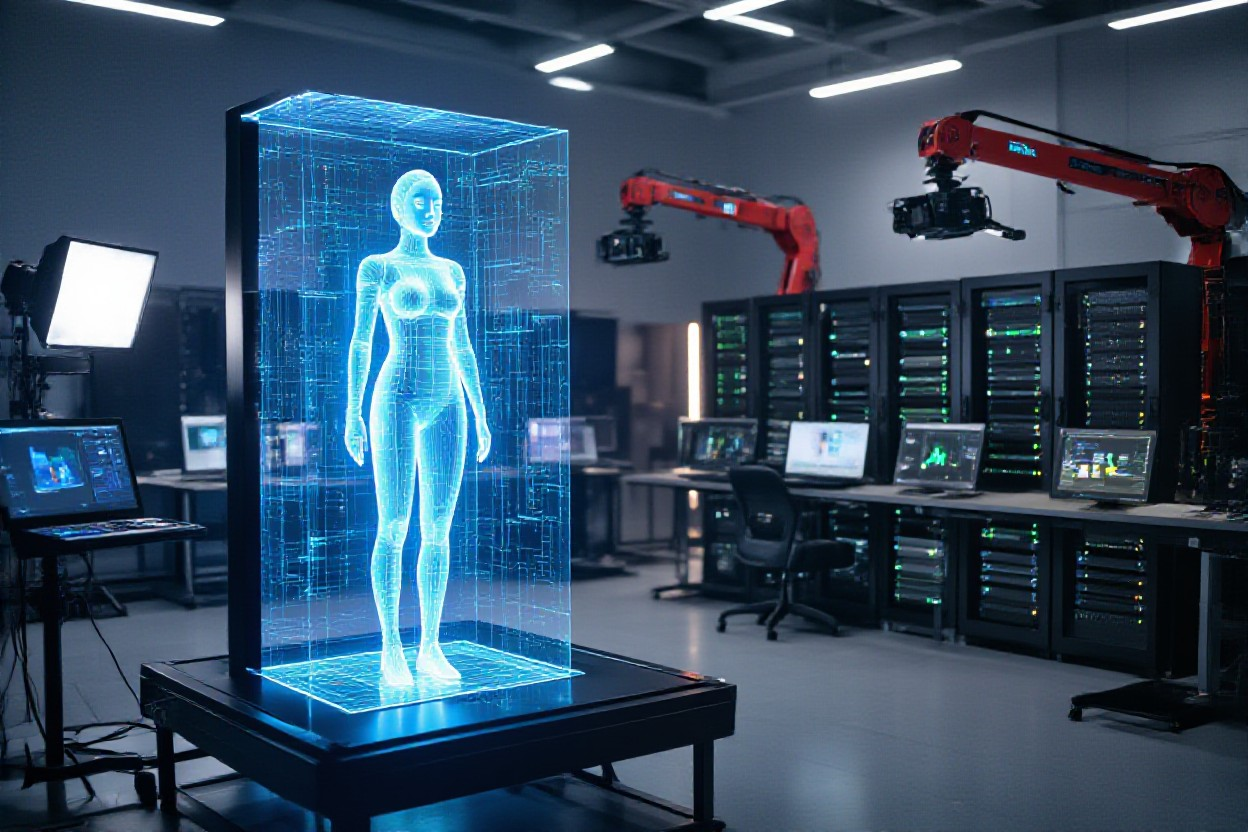

Have you ever wondered about the technology behind modern virtual influencers? Over the past few years, virtual influencers have evolved into powerful brand tools. They are built on photorealistic image and video generation, custom avatar pipelines, lip-sync and motion transfer, and automated posting and monetization workflows. These systems combine GANs and diffusion models, LoRA-style fine-tuning, synthetic data pipelines, and APIs to help you scale a persona consistently across platforms while retaining control over identity, rights, and campaign metrics.

Key Takeaways:

- Photoreal generation: advanced generative models and neural rendering create ultra-real faces/bodies, with fine-tuning (LoRA) and digital-twin workflows for persona consistency.

- Audio‑visual synthesis: lip-sync, multilingual dubbing, and AI videography enable script‑to‑video outputs and realistic motion for short‑form and long‑form content.

- End‑to‑end platforms: integrated tools (creation, API, auto‑posting, analytics, monetization) let brands scale influencer lifecycles programmatically.

- Automated photoshoots and scene generation: templateable 4K scenes, outfit swaps, and batch variations speed campaign production and visual experimentation.

- Governance and commercial tooling: built‑in licensing, disclosure workflows, moderation, and revenue features support brand safety and legal/commercial reuse.

Core AI Models and Architectures

You rely on a layered architecture: latent diffusion and GAN hybrids for high-fidelity stills, transformer and temporal-attention modules for motion, and LoRA-style fine-tuning to lock persona attributes. In production, LoRA with 50-200 reference images is a common way to preserve identity across shoots, while platforms like Influencer Studio and SynthLife chain model outputs into API pipelines so your images and videos feed posting schedules automatically.

Generative image models (diffusion, GANs)

In image pipelines you typically use latent diffusion for photoreal texture and GAN components for high-resolution face detail and speed; classifier-free guidance sharpens outputs and LoRA or fine-tuned checkpoints embed a consistent persona. Influencer Farm and ZenCreator push 4K scenes and batch variations for campaign shoots, and SynthLife’s cloning plus viral-template tools let you reuse facial attributes and outfit themes across feeds.
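
Classifier-free guidance is a short formula: the sampler runs the denoiser once with the prompt and once without it, then extrapolates from the unconditional prediction toward the conditional one. The sketch below assumes both noise predictions are already available as flat lists of floats (a stand-in for latent tensors); the function name is hypothetical and not any platform's API.

```python
def classifier_free_guidance(eps_uncond, eps_cond, guidance_scale):
    """Classifier-free guidance: eps = eps_uncond + w * (eps_cond - eps_uncond).

    eps_uncond / eps_cond are the denoiser's noise predictions without and with
    the text prompt, flattened to lists of floats for this sketch.
    """
    if len(eps_uncond) != len(eps_cond):
        raise ValueError("predictions must have the same length")
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]


# w = 1 returns the conditional prediction; w > 1 extrapolates past it.
guided = classifier_free_guidance([0.0, 1.0], [1.0, 1.0], guidance_scale=7.5)
```

Larger scales trade sample diversity for prompt adherence; Stable Diffusion pipelines commonly default to a scale of around 7.5.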

Video and temporal models (text-to-video, frame consistency)

For video, temporal diffusion and transformer-based text-to-video nets condition each frame on prior context to reduce flicker and target social formats at ~24-30 fps; integrated lip-sync from Sozee and HeyGen lets your virtual talent deliver dialogue and scripted short-form clips with consistent motion and expressions.

Digging deeper, you enforce coherence with optical-flow warping, keyframe conditioning, and temporal-attention or recurrent modules, plus a temporal-consistency loss trained on multi-frame datasets to cut frame jitter. When identity matters, 3D-aware priors (NeRF or 3DMM) or mocap retargeting are fused with identity adapters so your face and body remain stable across camera moves. Evaluate with FVD and lip-sync error metrics; in practice Sozee scales hundreds-to-thousands of short clips, HeyGen handles script-to-video and multilingual sync, and Virbo/D-ID provide large-scale dubbing and avatar APIs for global campaigns.
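
A flow-warped temporal-consistency term compares each frame with the previous frame warped along optical flow, so only changes that the motion does not explain are penalized. This is a minimal sketch, assuming grayscale frames stored as 2-D lists, a precomputed backward flow field with one (dx, dy) per pixel, and nearest-neighbour sampling; training code would use bilinear sampling on tensors plus an occlusion mask, and the function names are hypothetical.

```python
def warp_backward(prev, flow):
    """Warp `prev` into the current frame using backward flow (nearest neighbour).

    flow[y][x] = (dx, dy) means pixel (x, y) of the current frame came from
    (x + dx, y + dy) in the previous frame. Also returns a validity mask that
    is False where the source position falls outside the image.
    """
    h, w = len(prev), len(prev[0])
    warped = [[0.0] * w for _ in range(h)]
    valid = [[False] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            dx, dy = flow[y][x]
            sx, sy = round(x + dx), round(y + dy)
            if 0 <= sx < w and 0 <= sy < h:
                warped[y][x] = prev[sy][sx]
                valid[y][x] = True
    return warped, valid


def temporal_consistency_loss(prev, curr, flow):
    """Mean absolute difference between `curr` and flow-warped `prev` over valid pixels."""
    warped, valid = warp_backward(prev, flow)
    total, count = 0.0, 0
    for y in range(len(curr)):
        for x in range(len(curr[0])):
            if valid[y][x]:
                total += abs(curr[y][x] - warped[y][x])
                count += 1
    return total / count if count else 0.0


prev = [[0.0, 1.0, 2.0], [3.0, 4.0, 5.0]]
curr = [[9.0, 0.0, 1.0], [9.0, 3.0, 4.0]]   # content moved one pixel right
flow = [[(-1, 0)] * 3 for _ in range(2)]     # each pixel came from x - 1
loss = temporal_consistency_loss(prev, curr, flow)  # 0.0: motion fully explained
```

With a zero flow field the same frames give a positive loss, which is the jitter signal the training objective pushes down.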

Appearance and Identity: 3D, Rendering, and Stylization

You combine photoreal pipelines and stylized art direction to lock down an influencer’s look: meshes, PBR textures, hair cards, and a stylization layer that can shift between hyper-real and branded cartoon styles. Platforms like Influencer Studio and SynthLife give you LoRA training and consistent persona outputs for feeds, while Influencer Farm generates campaign-ready 4K scenes and outfits; this lets you maintain identity across ads, short-form videos, and monetized templates without rebuilding models each time.

Neural rendering and photoreal 3D heads/bodies

NeRFs and neural texture maps now sit alongside classic morphable models, so you can create photoreal heads from thousands of frames and render coherent expressions at 30-60 fps for short-form video. Influencer Studio’s LoRA workflows and HeyGen’s digital-twin lip-sync show how you can fine-tune identity with targeted datasets, producing consistent faces/bodies across image and video pipelines while retaining control over age, gaze, and lighting.
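
Neural radiance fields render each pixel by compositing density and colour samples along the camera ray. The sketch below implements the standard discrete volume-rendering sum for one ray, C = Σ_i T_i (1 − exp(−σ_i δ_i)) c_i with T_i = Π_{j<i} exp(−σ_j δ_j); it assumes the densities and colours have already been queried from a trained network, which is out of scope here, and the function name is hypothetical.

```python
import math


def composite_ray(sigmas, colors, deltas):
    """NeRF-style volume rendering of one ray from discrete samples.

    sigmas: density at each sample, ordered near to far
    colors: (r, g, b) at each sample
    deltas: distance between consecutive samples
    Returns (rgb, accumulated_opacity).
    """
    transmittance = 1.0
    rgb = [0.0, 0.0, 0.0]
    for sigma, color, delta in zip(sigmas, colors, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this segment
        weight = transmittance * alpha          # share of light reaching the camera
        for k in range(3):
            rgb[k] += weight * color[k]
        transmittance *= 1.0 - alpha            # light left for samples behind
    return tuple(rgb), 1.0 - transmittance
```

A dense sample near the camera hides everything behind it, which is how a NeRF head keeps a consistent silhouette from any viewpoint.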

Texture, wardrobe generation, and style transfer

You use diffusion and GAN-based generators to produce 4K albedo, normal and roughness maps, then apply CLIP-guided style transfer or LoRA fine-tunes for brand-consistent looks. Influencer Farm’s virtual photoshoots and SynthLife’s viral templates let you generate themed outfits and batch variations quickly, so you can spin up campaign-specific wardrobes and preserve material realism for hero shots or social thumbnails.

In practice the pipeline starts with a base mesh and UV unwrap, then you generate 4K (sometimes 8K for hero assets) texture maps via conditioned diffusion models and procedural brushes; normal and roughness maps are validated in a PBR renderer (Unreal/Blender). Cloth is either procedurally parameterized or simulated in Marvelous Designer, then combined with style-transfer LoRAs to enforce a brand palette. APIs from SynthLife or Influencer Farm let you batch-generate 10+ outfit variations per shoot and push them to auto-post schedules, so you can scale lookbooks while keeping material fidelity and persona consistency.
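
The validation of normal and roughness maps can start with cheap numeric checks before anything reaches Unreal or Blender. This is a hedged sketch, assuming 8-bit tangent-space normal maps (decoded from [0, 255] to [−1, 1]) and roughness stored as floats in [0, 1]; the tolerance and function names are illustrative. The length check does not depend on whether the green channel follows the OpenGL or DirectX convention.

```python
import math


def decode_normal(rgb8):
    """Map an 8-bit tangent-space normal texel from [0, 255] to [-1, 1]."""
    return tuple(c / 255.0 * 2.0 - 1.0 for c in rgb8)


def validate_normal_map(texels, tol=0.05):
    """Return indices of texels that are not (close to) unit length or that
    point into the surface (z <= 0). An empty list means the map passed."""
    bad = []
    for i, texel in enumerate(texels):
        x, y, z = decode_normal(texel)
        length = math.sqrt(x * x + y * y + z * z)
        if abs(length - 1.0) > tol or z <= 0.0:
            bad.append(i)
    return bad


def validate_roughness(values, lo=0.0, hi=1.0):
    """Return indices of roughness values outside [lo, hi]."""
    return [i for i, v in enumerate(values) if not lo <= v <= hi]
```

A flat texel such as (128, 128, 255) passes, while black (0, 0, 0) or mid-grey (128, 128, 128) texels, typical signs of a generator filling the wrong channel, are flagged.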

Motion, Speech, and Behavioral Animation

When you stitch together speech, motion, and behavior for a virtual influencer, you rely on both offline synthesis and near‑real‑time pipelines that feed Instagram/TikTok-ready clips. Platforms in 2026, such as SynthLife for full lifecycle management and Influencer Studio for LoRA-tuned consistency, let you produce campaign sequences and auto-post short-form videos (15-60 s) while maintaining persona continuity across feeds and ad spots.

Speech-to-animation, lip‑sync, and viseme modeling

You map phonemes to visemes using neural aligners and TTS-driven timing, then refine with per-frame corrective layers so lip motion matches expression depth. Tools such as HeyGen and D‑ID provide multilingual lip-sync, Virbo supports 80+ languages and 350+ avatars for templated dubbing, and Sozee combines AI videography with lip-sync scaling so you can generate hundreds of spoken clips with consistent mouth shapes and eye-contact fixes.
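
The phoneme-to-viseme step can be illustrated with a lookup table and a time-to-frame conversion. This is a minimal sketch, assuming the aligner or TTS engine emits (phoneme, start, end) tuples in seconds with ARPAbet-style labels; the viseme table is hypothetical and far smaller than a production set, and coarticulation and per-frame corrective layers are not modelled.

```python
# Hypothetical, deliberately small phoneme-to-viseme table; production rigs
# define their own viseme sets.
PHONEME_TO_VISEME = {
    "P": "MBP", "B": "MBP", "M": "MBP",
    "F": "FV", "V": "FV",
    "AA": "AA", "AE": "AA", "AH": "AA",
    "IY": "EE", "EH": "EE",
    "UW": "OO", "OW": "OO",
    "S": "SZ", "Z": "SZ",
    "SIL": "REST",
}


def visemes_to_keyframes(timed_phonemes, fps=30):
    """Convert (phoneme, start_s, end_s) tuples into (frame, viseme) keys.

    Unknown phonemes fall back to a neutral viseme, and consecutive
    duplicates are merged so the rig only receives changes.
    """
    keys = []
    for phoneme, start, _end in timed_phonemes:
        viseme = PHONEME_TO_VISEME.get(phoneme, "NEUTRAL")
        if keys and keys[-1][1] == viseme:
            continue
        keys.append((round(start * fps), viseme))
    return keys


keys = visemes_to_keyframes(
    [("SIL", 0.0, 0.5), ("M", 0.5, 1.0), ("B", 1.0, 1.5), ("AA", 1.5, 2.0), ("XX", 2.0, 2.5)],
    fps=30,
)
```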

Pose transfer, motion synthesis, and expression control

You use pose-transfer networks and motion priors to retarget actor or mocap sequences onto your character, blending IK/FK rigs with learned motion fields. Influencer Farm (hyperlush) excels at ultra-realistic scene generation and 4K outputs for fashion shoots, while SynthLife and Sozee let you synthesize gesture variations programmatically to match campaign briefs and themed outfits.

Under the hood you combine skeleton retargeting, diffusion or autoregressive motion models, and blendshape-based facial rigs (commonly 40-100 coefficients) to control subtle expressions. Real-world pipelines sample motion at 24-60 fps, apply physics-aware constraints for foot contact and secondary motion, and expose high-level controls (mood, energy, gaze) that let you generate A/B variants rapidly for split-testing and monetization workflows.
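
A blendshape rig evaluates a face as the neutral mesh plus a weighted sum of per-vertex offsets, v = v₀ + Σ_i w_i Δ_i, and those weights are the coefficients the paragraph refers to. The sketch assumes delta blendshapes stored as per-vertex offsets and clamps weights to [0, 1]; the shape names (`smile`, `jawOpen`) are hypothetical examples rather than a specific rig's naming.

```python
def apply_blendshapes(neutral, deltas, weights):
    """Delta blendshape model: v = neutral + sum_i w_i * delta_i.

    neutral: list of (x, y, z) vertices
    deltas:  dict name -> list of per-vertex (dx, dy, dz) offsets
    weights: dict name -> coefficient, clamped to [0, 1]
    """
    out = [list(v) for v in neutral]
    for name, w in weights.items():
        w = min(max(w, 0.0), 1.0)
        for i, d in enumerate(deltas[name]):
            for k in range(3):
                out[i][k] += w * d[k]
    return [tuple(v) for v in out]


neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
deltas = {
    "smile":   [(0.0, 1.0, 0.0), (0.0, 0.5, 0.0)],
    "jawOpen": [(0.0, -2.0, 0.0), (0.0, 0.0, 0.0)],
}
posed = apply_blendshapes(neutral, deltas, {"smile": 0.5, "jawOpen": 0.25})
```

Producing an A/B variant then amounts to editing the weight dictionary, for example lowering `smile` for a calmer take, without touching the mesh.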

| Aspect | What It Is | How It’s Used for Virtual Influencers | Key Tools / Techniques |
|---|---|---|---|
| 3D modeling & CGI | Creation of the influencer’s body, face, clothing, and environments as 3D (or high-end 2D) assets. | Artists build the avatar, then render images or videos for posts, stories, and ads; style can be photorealistic or stylized. | Blender, Autodesk Maya, Cinema 4D, Unreal Engine / MetaHuman, other CGI pipelines. |
| Motion capture & animation | Tracking human body and facial movement and retargeting it to the digital character. | Enables natural walking, posing, dancing, and facial expressions; can be real time for streams or pre-recorded content. | Markerless mocap with AI pose estimation, multi‑camera rigs, tools like MotionBuilder, facial capture via smartphones and specialized software. |
| Rendering & real-time engines | Software that turns 3D scenes into final images or videos, sometimes in real time. | Produces high‑quality posts, videos, and live appearances; real‑time rendering enables interactive streams and rapid content turnaround. | Unreal Engine, real‑time shaders, high‑end rendering tools for photorealistic lighting and skin. |
| Generative AI for visuals | AI models that create or enhance images, outfits, backgrounds, and even full scenes. | Used to design new looks, settings, and campaigns quickly, and to scale content production across platforms. | Image generators similar to Stable Diffusion or DALL‑E, AI upscaling, style transfer, and pose‑guided generation. |
| Natural language processing (NLP) | Large language models that understand prompts and generate text. | Write captions, comments, and DMs; maintain a consistent persona and tone across posts and languages. | GPT‑style models (e.g., GPT‑4), fine‑tuned chatbots, and sentiment analysis systems. |
| AI behavior & personalization | Machine learning systems that learn from audience data and performance metrics. | Optimize posting times, content themes, and responses; adapt the influencer’s “personality” and topics to maximize engagement. | Recommendation and analytics models, engagement prediction, A/B testing, audience segmentation algorithms. |
| Voice synthesis | Deep‑learning models that generate synthetic speech. | Give the influencer a recognizable voice for videos, streams, podcasts, and interactive experiences. | Voice cloning and TTS platforms such as Synthesia‑style services and Overdub‑like tools. |
| Social media automation | Tools that schedule posts, manage multi‑platform presence, and automate replies. | Maintain a 24/7 presence, cross‑post content, and handle routine engagement at scale. | Platforms like Buffer and Hootsuite, plus custom automation and chat workflows. |
| Data analytics & strategy | Tracking performance metrics (reach, CTR, sentiment, demographics). | Guide creative direction and campaign strategy, making virtual influencers more effective for brands. | Analytics dashboards, social listening, trend detection, and optimization models. |
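
The A/B testing listed under AI behavior & personalization usually ends in a significance check on engagement rates. One standard choice is the pooled two-proportion z-test sketched below; it assumes independent impressions and reasonably large samples, and the function name is hypothetical.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Two-sided z-test for a difference in engagement rate between variants.

    Returns (z, p_value) using the pooled-proportion standard error.
    """
    p_a = clicks_a / views_a
    p_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value
```

For instance, 50 clicks out of 1,000 views for variant A against 100 out of 1,000 for variant B gives z ≈ 4.2, well past conventional significance thresholds.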

Personalization, Consistency, and Scale

You stitch together LoRA-tuned personalities, templated scenes, and scheduled posting to keep your persona consistent across 1k+ posts. SynthLife and Influencer Studio let you auto-post to Instagram/TikTok and monetize, Influencer Farm produces 4K campaign images in minutes, and Sozee scales lip-sync video to thousands of localized clips. At scale, you monitor identity drift with face-embedding similarity thresholds and automated style checks to keep the persona on-brand.

Fine‑tuning approaches (LoRA, embeddings) for persona fidelity

You use LoRA adapters (rank 4-32) to inject persona-specific weights from as few as 50-200 images, cutting GPU time and storage compared with full-model retraining. Embeddings (typically 768-1,024 dimensions) encode recurring props, phrases, and tonal cues for retrieval-augmented prompts. Influencer Studio and SynthLife expose LoRA training and embedding APIs so you can iterate on personas, A/B test adapters, or roll back to a previous style in minutes.
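
LoRA keeps the base weight W frozen and learns a low-rank update, W' = W + (α/r)·B·A, which is why adapters are small and easy to swap or roll back. The plain-Python sketch below merges a rank-r adapter into one weight matrix and compares trainable-parameter counts; the names are hypothetical, and real trainers apply this per attention projection on tensors.

```python
def matmul(a, b):
    """Plain-Python matrix product of nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def lora_merge(w, a, b, alpha):
    """Merge a LoRA adapter into a frozen weight: W' = W + (alpha / r) * B @ A.

    w: d_out x d_in base weight, b: d_out x r, a: r x d_in.
    """
    r = len(a)
    delta = matmul(b, a)
    scale = alpha / r
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]


def lora_param_count(d_in, d_out, r):
    """Trainable parameters for a rank-r adapter vs. full fine-tuning."""
    return r * (d_in + d_out), d_in * d_out


merged = lora_merge([[1.0, 0.0], [0.0, 1.0]], a=[[1.0, 2.0]], b=[[1.0], [0.0]], alpha=1.0)
```

For a 768 × 768 projection at rank 8, the adapter trains 12,288 parameters instead of 589,824.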

Asset/version management and automated variation pipelines

You implement a single source of truth for 3D models, textures, and generated clips with semantic tags and version hashes; ZenCreator and SynthLife support batch variations and programmatic APIs to produce thousands of lookbook or feed images. Automated pipelines apply outfit swaps, color grading, and format transcodes, then push A/B batches to platforms so engagement metrics guide the next creative iteration.
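
One way to produce the version hashes described here is content addressing: hash the asset bytes together with canonical metadata, so identical inputs always yield the same ID. This sketch uses only the Python standard library; the metadata keys in the example are hypothetical, and it is not a description of how ZenCreator or SynthLife version assets internally.

```python
import hashlib
import json


def asset_version_id(asset_bytes, metadata):
    """Content-addressed version ID for an asset plus its semantic tags.

    Hashes the raw bytes together with canonical JSON metadata (sorted keys,
    fixed separators), so key order does not matter but any tag change does.
    """
    h = hashlib.sha256()
    h.update(hashlib.sha256(asset_bytes).digest())
    canonical = json.dumps(metadata, sort_keys=True, separators=(",", ":"))
    h.update(canonical.encode("utf-8"))
    return h.hexdigest()


v1 = asset_version_id(b"pixels", {"campaign": "spring", "locale": "en-US"})
v2 = asset_version_id(b"pixels", {"locale": "en-US", "campaign": "spring"})
v3 = asset_version_id(b"pixels", {"campaign": "spring", "locale": "fr-FR"})
```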

For operations, you tie artifact stores (S3/DVC) to CI pipelines that run identity-preservation checks (face-embedding cosine similarity >0.8 or LPIPS <0.15) before approving assets. You tag versions with semantic metadata (campaign, mood, locale) and run parametrized jobs that vary scene, pose, and lighting; Influencer Farm can render dozens of 4K variants while SynthLife auto-posts approved sets, reducing manual QA hours and preserving legal/commercial metadata per asset.
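
The identity-preservation check reduces to a threshold test on embeddings. This is a minimal sketch, assuming the reference and candidate face embeddings come from the same (unspecified) face-recognition model and that any LPIPS score is computed elsewhere; the thresholds mirror the 0.8 and 0.15 values above, and the function names are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def identity_gate(reference, candidate, lpips=None, cos_min=0.8, lpips_max=0.15):
    """Approve an asset if its face embedding stays close to the persona,
    or if an externally computed LPIPS distance is small enough.

    Returns (approved, cosine_similarity).
    """
    cos = cosine_similarity(reference, candidate)
    if cos > cos_min:
        return True, cos
    if lpips is not None and lpips < lpips_max:
        return True, cos
    return False, cos
```

Logging the returned similarity alongside the decision also gives you the drift history needed to spot a persona slowly changing over many releases.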

Production Tooling and Deployment

Real‑time engines, compositing, and post‑production workflows

You push real‑time engines like Unreal or Unity to run photoreal renders, live compositing and MoCap pipelines so you ship content faster; studios pair those engines with NLEs and DaVinci/Fusion-style compositors to polish color and hair‑specular passes. Influencer Farm’s 4K scene generation and Sozee’s AI videography speed you from capture to publish, while latency targets under 100 ms keep lip‑sync and motion feeling natural for live or streamed drops.
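
A latency target is easier to hold when each stage has an explicit budget. The sketch below sums per-stage latencies and converts the total into frames of delay; the stage names and millisecond values in the example are hypothetical placeholders, not measurements of any engine or platform.

```python
def latency_report(stage_ms, budget_ms=100.0, fps=30.0):
    """Sum per-stage latencies and compare them with an end-to-end budget."""
    total = sum(stage_ms.values())
    frame_ms = 1000.0 / fps
    return {
        "total_ms": total,
        "within_budget": total <= budget_ms,
        "frames_of_delay": total / frame_ms,
        "headroom_ms": budget_ms - total,
    }


# Hypothetical stage timings for a live lip-synced stream.
report = latency_report({"capture": 10.0, "face_solve": 25.0, "render": 33.0, "encode": 15.0})
```

At 30 fps one frame lasts about 33 ms, so a 100 ms budget allows roughly three frames of end-to-end delay.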

APIs, automation, and integration with social platforms

You rely on platform APIs and automation to reach feeds at scale: SynthLife’s auto‑posting and monetization hooks, Influencer Studio’s REST API for LoRA‑trained persona variants, and D‑ID or HeyGen endpoints for script‑to‑video let you schedule, A/B test, and localize short‑form content across Instagram, TikTok and YouTube. Virbo’s 350+ avatars and 80+ languages illustrate how programmatic pipelines unlock global campaigns.

You implement webhooks, OAuth, and REST calls to automate rendering, metadata, and posting, stream over RTMP for live appearances, and run batch jobs that create tens to hundreds of asset variants per campaign. For example, you can trigger Sozee to render 30 lip‑synced clips, call Influencer Studio to apply a LoRA persona, then have SynthLife auto‑post and route analytics back into your dashboard, preserving disclosure and commercial rights throughout the workflow.
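
When a render or posting service calls back through a webhook, the receiver should confirm that the payload really came from that service. Many providers sign webhook bodies with HMAC-SHA256; whether Sozee, Influencer Studio, or SynthLife do so, and in which header, is not documented here, so treat this standard-library sketch as an assumption about the scheme rather than any vendor's API.

```python
import hashlib
import hmac


def sign_payload(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body (what a sender would attach)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def verify_webhook(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Check the signature sent with a webhook, using a constant-time comparison."""
    expected = sign_payload(secret, body)
    return hmac.compare_digest(expected, signature_hex)


secret = b"shared-secret"                      # hypothetical shared secret
body = b'{"event":"render.completed"}'         # hypothetical payload
sig = sign_payload(secret, body)
```

Verify against the exact raw bytes received; re-serializing parsed JSON can change whitespace or key order and break the signature.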

Ethics, Rights, and Governance

You should treat virtual influencer programs like IP-first products: assign clear ownership, log consents, and bake governance into pipelines so that platforms such as SynthLife (monetization tools, cloning) and Influencer Studio (LoRA training, API access) can prove provenance for campaigns on Instagram and TikTok. Enforce contract clauses for dataset sourcing, maintain audit logs for monetization, and map obligations to GDPR, CPRA, and the emerging EU AI Act to reduce legal risk across markets.

Likeness/IP, consent, and attribution/disclosure practices

You must obtain explicit, auditable consent for any real-person training data and model-derived likenesses, use written model releases when cloning faces, and register clear licensing for generated personas; platforms like Influencer Farm (4K photoshoots) and SynthLife complicate attribution, so include disclosure clauses, visible sponsorship tags per FTC guidance, and machine-readable metadata to link posts back to permitted IP and paid partnerships.

Moderation, deepfake mitigation, and regulatory compliance

You should deploy layered defenses (automated watermarking, provenance metadata, and human review) to flag manipulative content produced with tools like HeyGen or D-ID, integrate platform APIs for takedown workflows, and keep compliance checklists for GDPR, CPRA, and sector rules so moderators can act within documented SLA windows when disputes arise.

You can operationalize mitigation by embedding robust watermarks and cryptographic provenance (signed manifests) into each generated asset, using perceptual-hash fingerprints that survive recompression (cryptographic hashes change with any re-encode, so they belong in the signed manifest instead), and maintaining model cards plus dataset inventories for auditability. Pair automated detectors with a human-in-the-loop team, use cross-platform takedown APIs, and log every content decision to demonstrate compliance to regulators or brand partners during investigations.
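
Fingerprints that survive recompression are usually perceptual hashes rather than cryptographic ones. The sketch below implements the simple average hash (aHash) with a Hamming-distance comparison; it assumes a grayscale image as a 2-D list whose sides are multiples of 8 (real code would resize first), and production systems typically use more robust variants.

```python
def average_hash(gray, size=8):
    """aHash: block-average a grayscale image to size x size cells, then set one
    bit per cell that is brighter than the mean of all cells.

    gray: 2-D list whose height and width are multiples of `size` (an
    assumption that keeps the sketch short).
    """
    h, w = len(gray), len(gray[0])
    bh, bw = h // size, w // size
    cells = []
    for by in range(size):
        for bx in range(size):
            block = [gray[y][x]
                     for y in range(by * bh, (by + 1) * bh)
                     for x in range(bx * bw, (bx + 1) * bw)]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming(a, b):
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


img = [[x * 16 + y for x in range(16)] for y in range(16)]
noisy = [[img[y][x] + ((x * 7 + y * 3) % 5) - 2 for x in range(16)] for y in range(16)]
inverted = [[255 - v for v in row] for row in img]
```

Small pixel-level noise, like recompression artifacts, leaves the hash almost unchanged, while a genuinely different image lands far away in Hamming distance.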

To wrap up

As a reminder, the technology behind modern virtual influencers combines photoreal image/video synthesis, advanced lip-sync and motion capture, LoRA-style persona training, and end-to-end tooling for posting, monetization, and rights management. You can deploy consistent, scalable digital personas across platforms using tools like Influencer Studio, SynthLife, Influencer Farm/hyperlush, and Sozee, while maintaining ethical disclosure and commercial controls that protect your brand and audience.

FAQ

Q: What core AI technologies power modern virtual influencers?

A: Modern virtual influencers are built on a mix of generative and neural-rendering techniques: diffusion models for high-fidelity text-to-image and image-to-image generation; neural radiance fields (NeRF) and neural rendering for consistent 3D-aware scenes; specialized temporal/video diffusion and transformer-based video models for frame-coherent motion; neural lip-sync and TTS models for spoken content; fine-tuning methods like LoRA or custom embeddings to lock a persona’s visual style; and control modules (pose/segmentation/ControlNet) to enforce composition, outfits, and camera angles. Pipelines combine these models with asset managers, facial rigs/blendshapes, and post-processing tools for color, grain, and stabilization.

Q: How do platforms keep a virtual influencer visually and behaviorally consistent across images, videos, and campaigns?

A: Platforms use identity-preserving artifacts: learned embeddings or identity LoRAs that encode face/body features, style tokens for wardrobe and lighting, and persona profiles that store language style, gesture patterns, and editorial rules. Generation pipelines enforce consistency by conditioning on reference frames, pose maps, and 3D proxies (NeRF/rigs) and by running batch renders with deterministic seeds or versioned model checkpoints. End-to-end systems (SynthLife, Influencer Studio) add content calendars, auto-posting, and brand templates so assets stay coherent across Instagram, TikTok, and paid ads.
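
Deterministic seeds are typically derived from stable identifiers rather than chosen by hand. The sketch below hashes hypothetical persona, campaign, shot, and checkpoint IDs into a 32-bit seed with SHA-256 (Python's built-in `hash()` is salted per process, so it is unsuitable) and uses the seed in a stand-in noise generator; a real pipeline would pass it to its diffusion sampler instead.

```python
import hashlib
import random


def derive_seed(persona_id, campaign_id, shot_index, checkpoint):
    """Derive a reproducible 32-bit seed from stable identifiers."""
    key = f"{persona_id}|{campaign_id}|{shot_index}|{checkpoint}".encode("utf-8")
    return int.from_bytes(hashlib.sha256(key).digest()[:4], "big")


def sample_latent(seed, n=4):
    """Stand-in for initial diffusion noise: the same seed gives the same numbers."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


s1 = derive_seed("persona-01", "spring", 3, "ckpt-v2")
```

Including the checkpoint ID in the key means a model upgrade deliberately produces new seeds, so re-renders from an old checkpoint stay reproducible.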

Q: What methods synchronize lip movement, facial expression, and body motion with audio or scripts?

A: Synchronization is achieved with phoneme-to-viseme mapping and neural lip-sync networks that convert audio or TTS output into frame-aligned facial controllers. Motion-retargeting maps mocap or keyframe animation onto a character rig, while pose-conditioned video generators produce temporally consistent body movement. Hybrid pipelines combine mocap for complex gestures and learned temporal models for subtle micro-expressions and smoothing. Platforms like HeyGen and Sozee specialize in multilingual lip-sync, script-to-video, and AI videography to automate sync at scale.

Q: How do teams scale production and monetize virtual influencers across channels?

A: Scalability comes from template libraries, batch generation, APIs, and automation: reusable scene/outfit templates, viral-format video templates, automated captioning and localization, and scheduler integrations for cross-platform posting. Monetization features include affiliate links, product placement templates, branded campaign toolkits, and viewer commerce hooks. Tools such as SynthLife provide end-to-end growth and monetization workflows; Influencer Studio and Influencer Farm (hyperlush) focus on consistent persona delivery and campaign-ready assets to speed agency workflows and ad production.

Q: What legal, ethical, and rights-management issues must be handled when deploying virtual influencers?

A: Key issues include disclosure (labeling synthetic content), image and voice rights (clear commercial licenses for model weights, training data, and any cloned voices), intellectual-property for outfits and brand assets, and compliance with platform and advertising rules. Provenance metadata, audit logs, and usage contracts help trace training data and license scopes. Many platforms embed consent workflows and brand-safe filters, and teams typically implement explicit disclosure tags and contractual protections before running commercial campaigns.
